When you update a value that's in TOAST, it always duplicates the whole value.

It was designed for atomic data type, it knows nothing about the internal structure of these types. The one slide that I wanted to highlight is where they show the way that TOAST storage works: if you update something, if you update a value in a row that exceeds that two kilobyte limit and is stored in TOAST, TOAST was not designed for updating values and particularly it wasn't designed for updating a small part of a large value. Sometimes people don't see the point, but they really think that JSONB is something that should behave well.

I'll skip over most of it here, but suffice to say that they agree that JSONB is important. There is a lot of in-depth understanding in this talk. Oleg and Nikita have worked on JSONB itself. This is one of the best summaries of how JSONB works and how JSONB performance works that I've seen over the years. I would encourage you to look at this presentation if you have too much time to spare. There was a really good talk late last year at PGConf New York by Oleg and Nikita from Postgres Pro. Now last but not least: there are people that are looking at this and they're trying to optimize this type of performance problems. The reason that TOAST is a thing is because things just don't fit in those eight kilobytes.

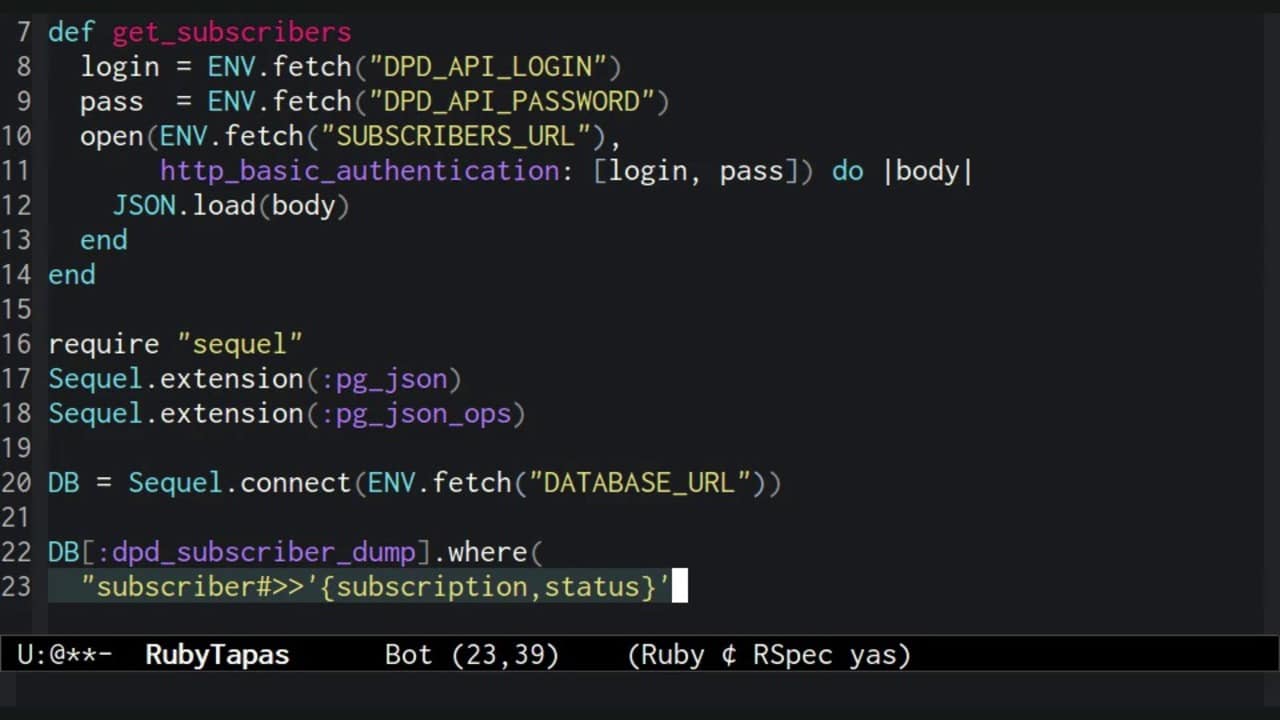

Postgres has an eight kilobyte place to put things. Halfway through the article, you can find a very helpful diagram. If you want to read more about how Postgres' physical structure works there's a good blog post that was written a few years ago that tries to summarize how Postgres does this. The thing to know is if you have large values that exceed that two kilobyte limit, then JSONB performance can be bad. In their test here, they saw that once you hit the TOAST storage, either TOAST uncompressed or TOAST compressed, JSONB does perform pretty bad. As noted here, this does cause performance of queries to get substantially worse. TOAST is a generic table of sorts that stores chunks of the actual values in the main table and stores those out of band in a separate mechanism. Instead of just adding pages into the main table itself, which would cause other problems, Postgres uses a separate mechanism, called TOAST or "The oversized attribute storage technique". If that doesn't work, Postgres has to figure out where it stores that data. The compression tries to fit it inside that page. The first thing that Postgres does when something exceeds the two kilobytes is to try to compress the data. That means because Postgres tries to optimize minimum number of tuples per page that each tuple has a maximum size of two kilobytes. Each page can contain a minimum of four tuples. So in practice, most people that use Postgres, even if it's on their own VM, operate on an eight kilobyte page size. Whilst you can customize this page size, that does require a recompile of Postgres. The important thing to note is that Postgres stores data in eight kilobyte pages. In the post, they go into details of how Postgres actually stores large values. They're comparing this against hstore, which is an older way of storing key value pairs in Postgres, as well as regular JSON, which is essentially a text datatype. This blog post by Evan Jones is an analysis of how JSONB storage behaves. Now, for those of you who don't know, JSONB is a binary version of the general JSON datatype in Postgres that was added in Postgres Version 9.4. Let's dive right in! How Postgres stores data: Large JSON value query performance What we have discussed in this episode of 5mins of Postgres How Postgres stores data: Large JSON value query performance.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed